What Is Shadow AI? A Managing Partner's Read on the Risk Sitting Inside Your Firm

Key Takeaways

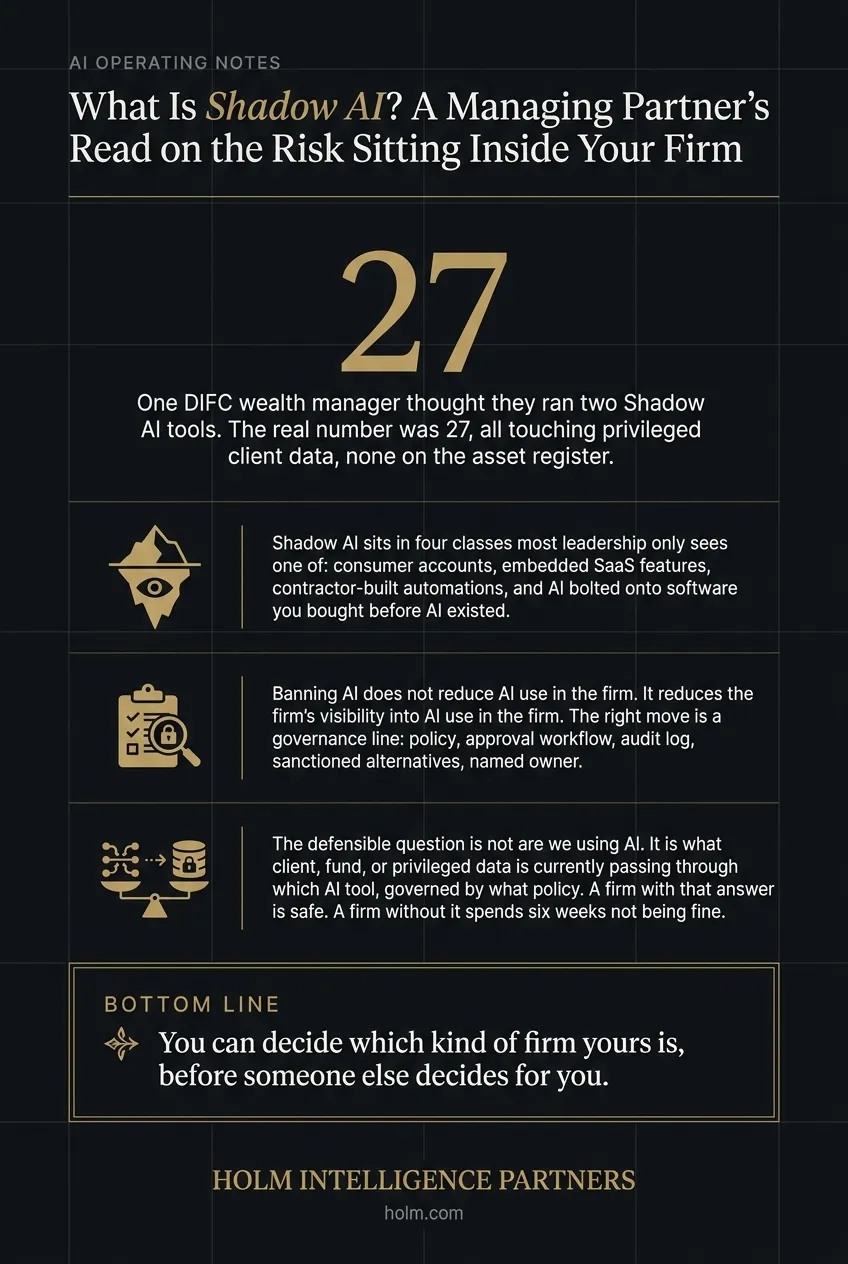

- One DIFC wealth manager thought they ran two AI tools. The real number was 27, all touching privileged client data, none on the asset register.

- Shadow AI sits in four classes most leadership only sees one of: consumer accounts, embedded SaaS features, contractor-built automations, and AI bolted onto software you bought before AI existed.

- Banning AI does not reduce AI use in the firm. It reduces the firm's visibility into AI use in the firm. The right move is a governance line: policy, approval workflow, audit log, sanctioned alternatives, named owner.

- The defensible question is not "are we using AI?" It is: what client, fund, or privileged data is currently passing through which AI tool, governed by what policy? A firm with that answer is safe. A firm without it spends six weeks not being fine.

- You can decide which kind of firm yours is, before someone else decides for you.

The first time I saw it, the firm was running 27 different AI tools and nobody had approved any of them.

I was three weeks into an AI Operating Audit at a DIFC wealth manager. The Managing Partner asked me a simple question over coffee on the second floor of their building: how many AI tools is the firm actually running?

He thought the answer was two: ChatGPT and the company-licensed Copilot. Maybe three if you counted the AI feature their CRM had recently shipped.

The real number was 27.

Two partners had personal Claude accounts they paid for out of pocket and used for IM drafting. The compliance team had quietly licensed a Gemini Workspace add-on for KYC checks. The marketing person was running Perplexity through her browser for prospect research. The IT contractor had built a custom workflow that pasted client correspondence into a self-hosted Llama model for summarization. A junior analyst was using a free voice-AI on his phone to transcribe partner calls into the deal-management system.

Every one of those tools touched client data. Privileged data. Discretionary mandate data. Holdings, correspondence, valuations, intent.

None had been approved by the Managing Partner. None had a data-handling clause in its licence. None showed up on the firm's IT asset register. As far as the partnership was concerned, none of them existed.

That's Shadow AI. And almost every regulated mid-market firm I look at runs more of it than leadership thinks.

What Shadow AI actually is (operator-grade definition)

Shadow AI is any artificial intelligence tool, model, subscription, or embedded feature in use across a firm that has not been formally approved, governed, or risk-assessed by leadership.

Two words in that definition do the work. The first, any, is broader than most firms recognize. Shadow AI is not just employees using ChatGPT on their personal devices. It's also:

- AI features baked into the SaaS you already pay for (Microsoft 365 Copilot, Salesforce Einstein, HubSpot's AI assistant, Adobe Firefly, Zoom's auto-summary) that get switched on without anyone reading the data-use clause.

- AI-powered Chrome extensions and browser tools installed individually across hundreds of devices.

- Automations built by a contractor or junior analyst that quietly call an external LLM API every time a form gets filled.

- Voice-transcription apps recording partner calls.

- Image-generation tools used by marketing for client decks.

- Code-completion tools used by developers that send your codebase to a third-party model.

The second, governed, is what separates Shadow AI from sanctioned AI. The same ChatGPT account, used by the same analyst, becomes sanctioned AI the moment leadership has named it, approved its data-handling terms, written a usage policy, and built it into the firm's risk register. It becomes Shadow AI the moment someone uses it without those four steps.
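A minimal sketch of that test, assuming a hypothetical `ToolGovernance` record with one flag per step. The class and field names are illustrative only and do not come from any real system.

```python
from dataclasses import dataclass


@dataclass
class ToolGovernance:
    """Hypothetical record: one flag per governance step named above."""
    named_by_leadership: bool
    data_terms_approved: bool
    usage_policy_written: bool
    in_risk_register: bool


def is_sanctioned(tool: ToolGovernance) -> bool:
    # Sanctioned only when all four steps are done;
    # missing any one of them leaves the tool in the shadow.
    return all(
        (
            tool.named_by_leadership,
            tool.data_terms_approved,
            tool.usage_policy_written,
            tool.in_risk_register,
        )
    )


analyst_chatgpt = ToolGovernance(True, True, True, False)
print(is_sanctioned(analyst_chatgpt))  # False: not yet in the risk register
```

The point of the all-or-nothing check is that the tool itself never changes. Only the governance around it does.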

Short version: Shadow AI is not a technology problem. It's a governance problem.

Why does Shadow AI specifically threaten regulated firms?

I work with wealth managers, multi-family offices, private equity funds, corporate services firms, and corporate law practices. Five sectors. Different daily work. Same exposure profile.

What every one of them shares is fiduciary or regulatory obligations over data that, if it leaked, would not just be embarrassing. It would trigger a regulator inquiry, an LP DDQ failure, an audit qualification, or a malpractice claim.

Shadow AI is dangerous in those firms in a way it is not in, say, a marketing agency or a SaaS startup. Three reasons.

First, the leak path is invisible. A partner pasting an IC memo into ChatGPT looks like productivity to that partner. They get a faster summary. The work proceeds. Nothing breaks. The firm's logs show no anomaly. There is no breach event. There is just a fact: that the contents of the IC memo are now in OpenAI's logs, possibly used for training, possibly accessible to whoever inherits the OpenAI account if the partner leaves. The exposure happens silently and persists indefinitely.

Second, the regulatory question is not theoretical. The DFSA, the FSRA, the SEC, the FCA, BaFin, and every other financial-services regulator I track are now asking firms how AI is being used, where client data goes, and who governs it. The question has already shown up in DDQ templates from major LPs and on auditor checklists. A firm that cannot answer the question with a real document, not "we use ChatGPT carefully," is exposed.

Third, the malpractice scenario is real. I'm aware of at least one DIFC law firm where an associate ran a redline of a confidential M&A document through a public generative AI tool, and the resulting suggestions ended up referenced in a similar transaction in which the firm was advising the other side. The firm settled quietly. The associate left. The post-mortem found that nobody at the firm had ever told anyone they couldn't use these tools, because nobody at the firm had ever thought about it.

If you're a Managing Partner in any of the five sectors I named, this is your problem. It is not your CTO's problem. There's no software you can buy that prevents an analyst from copying text into a browser window. The only thing that fixes Shadow AI is governance.

What are the four classes of Shadow AI in mid-market firms?

Every firm I audit has all four classes running. Most leadership teams only recognize the first one.

Class 1: Consumer AI accounts. Individual employees with personal ChatGPT, Claude, Gemini, Perplexity, or similar consumer-grade subscriptions paid out of pocket or through a personal credit card. These tools are not bound by enterprise data-handling terms. Whatever the employee pastes in is potentially used for training. The firm has no visibility, no log, no control.

This is the class most leadership teams already know about and have informally tried to ban. The ban does not work. I have never audited a firm where the consumer-AI ban was actually effective. Employees use these tools because they make work measurably faster, and the cost of getting caught is zero.

Class 2: Embedded AI in approved SaaS. Your firm pays for Microsoft 365, Salesforce, HubSpot, Zoom, Adobe Creative Cloud, DocuSign, Notion, Slack, or any of fifty other platforms. Each of these has, in the last 18 months, shipped at least one AI feature: Copilot, Einstein, Breeze, AI Companion, Firefly, Doc AI, Q&A, Magic Compose, AI Search.

These features are turned on by default in most cases. The data they ingest, your emails, your contacts, your contracts, your client comms, is processed by the vendor's AI infrastructure under the SaaS contract's data-use clause. Some contracts say the data is not used for training. Some say it might be. Almost none of the firms I audit have actually read the clause.

This is the class leadership recognizes least. Because the feature is "inside the tool we already approved," it does not feel like a new exposure. It is.

Class 3: Contractor-built automations. A developer or operations contractor was asked to build a workflow. They built it. The workflow includes an LLM API call somewhere, to summarize an email, classify a ticket, extract data from a document, draft a response.

The contractor left. The workflow keeps running. Nobody at the firm knows it makes an external API call. Nobody knows what data is being sent. Nobody has the API key in their inventory. The vendor on the other end has a contractual relationship with a person who no longer works at the firm.

This class is the least visible and the most dangerous. The other three classes leave a trail in usage logs or browser histories. Contractor-built automations run as scheduled jobs nobody reviews.

Class 4: AI in software you bought before AI existed. Your CRM, ERP, accounting software, legal practice management, or compliance platform was selected three years ago. The vendor has since updated it with AI features. Your firm received an email about it once. Nobody opened the email.

Those features are now live in production. They are processing your client data. They are not covered by any internal policy because the policy was written before the features existed.

How do you measure your Shadow AI exposure surface?

The question you want answered, as a Managing Partner, is not "are we using AI?" That question is decided. The question you want answered is: what client, fund, or privileged data is currently passing through which AI tool, governed by what policy?

That's a defensible question. A regulator, an LP, an auditor, or a client can ask it. A firm with the answer is safe. A firm without the answer is exposed.

The measurement is not as hard as it sounds. The AI Operating Audit method HIP runs takes about three to ten hours of time from your leadership team and produces the answer for the firm. The structure of the measurement, simplified:

Step 1. Tool inventory. List every AI tool, subscription, browser extension, and embedded feature in use across the firm. Use three discovery channels in parallel: IT asset register (catches the formally licensed), expense-report search (catches Class 1 consumer subscriptions), and a structured employee survey (catches the rest). Most firms find 4-5x more tools than they expected.
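As a rough sketch of how the three discovery channels combine, assuming each one has already been reduced to a plain list of tool names. The function name and the sample inputs are hypothetical.

```python
def build_inventory(asset_register, expense_hits, survey_answers):
    """Merge three discovery channels into one inventory.

    Returns a dict mapping each normalized tool name to the set of
    channels that surfaced it.
    """
    channels = {
        "asset_register": asset_register,
        "expenses": expense_hits,
        "survey": survey_answers,
    }
    inventory = {}
    for channel, names in channels.items():
        for name in names:
            key = name.strip().lower()  # "chatgpt " and "ChatGPT" are one tool
            inventory.setdefault(key, set()).add(channel)
    return inventory


# Illustrative inputs only.
inventory = build_inventory(
    asset_register=["Copilot", "ChatGPT"],
    expense_hits=["Claude", "chatgpt "],
    survey_answers=["Perplexity", "Claude"],
)
# Tools that never reached the asset register are the shadow candidates.
unregistered = sorted(
    name for name, seen in inventory.items() if "asset_register" not in seen
)
print(unregistered)  # ['claude', 'perplexity']
```

Recording which channel found each tool matters as much as the list itself: a tool that only the survey surfaced is one no system of record would have caught.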

Step 2. Data classification per tool. For each tool, identify what data class can possibly flow through it: public information, internal operational data, client PII, fund-flow data, privileged or confidential matter, regulated data (DFSA, FSRA, SEC, GDPR). Mark every tool against the highest-sensitivity class it could touch.
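One way to express "mark every tool against the highest-sensitivity class it could touch" is an ordered enumeration. The sketch below assumes the data classes rank from least to most sensitive in the order they are listed above. That strict ranking is an assumption of the example, and a firm may order them differently.

```python
from enum import IntEnum


class DataClass(IntEnum):
    # Order follows the list in Step 2, least to most sensitive.
    # Treating it as a strict ranking is an assumption of this sketch.
    PUBLIC = 1
    INTERNAL_OPERATIONAL = 2
    CLIENT_PII = 3
    FUND_FLOW = 4
    PRIVILEGED = 5
    REGULATED = 6


def highest_sensitivity(possible_classes):
    """Mark a tool with the most sensitive class it could touch."""
    return max(possible_classes)


# Hypothetical example: a call-transcription app.
transcriber = [
    DataClass.INTERNAL_OPERATIONAL,
    DataClass.PRIVILEGED,
    DataClass.CLIENT_PII,
]
print(highest_sensitivity(transcriber).name)  # PRIVILEGED
```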

Step 3. Vendor data-handling read. For each tool, read the actual contract. Specifically: does the vendor train on your data? Does the vendor retain logs? Where is the data hosted geographically? Is there a Data Processing Agreement in place? Are sub-processors disclosed?

Step 4. Per-tool verdict: Keep, Fix, or Kill.

- Keep if the tool is governed, data-handling is appropriate, and the value it produces justifies the residual risk.

- Fix if the tool produces real value but needs governance work: enterprise license, DPA, usage policy, audit log, or a redaction layer in front of it.

- Kill if the tool's value does not justify the data exposure even after a Fix, or if the vendor's terms are unfit for the data class.
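The verdict logic can be sketched as a small decision function. This is a simplification under stated assumptions: the three inputs are reviewer judgment calls reduced to booleans, and "data-handling is appropriate" is folded into the `terms_fit_data_class` flag. The parameter names are hypothetical.

```python
def verdict(governed: bool, value_justifies_exposure: bool,
            terms_fit_data_class: bool) -> str:
    """Return "Keep", "Fix", or "Kill" for one tool.

    governed: policy, licence, and logging are already in place.
    value_justifies_exposure: the value is worth the residual risk,
        even after any governance work.
    terms_fit_data_class: vendor terms suit the data class in play.
    """
    # Kill comes first: no amount of governance rescues unfit terms
    # or a tool whose value does not justify the exposure.
    if not terms_fit_data_class or not value_justifies_exposure:
        return "Kill"
    if governed:
        return "Keep"
    # Real value, acceptable terms, governance work still to do.
    return "Fix"


print(verdict(governed=False, value_justifies_exposure=True,
              terms_fit_data_class=True))  # Fix
```

Checking the Kill conditions before anything else mirrors the order a reviewer would want: a tool that cannot be made safe should not consume governance effort.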

Step 5. Build the governance line. Once the Keep / Fix / Kill verdict is done per tool, the firm has a clean inventory. Around that inventory, the governance line gets installed: an AI usage policy, a tool-approval workflow for new requests, an audit log, a refresh cadence (quarterly is right for most mid-market firms), and a named owner, usually the COO or General Counsel, sometimes a Fractional CAIO if the firm has gone the AI Operating Partner route.

That's the measurement. The output is a document a Managing Partner can hand to a regulator, an LP, or an auditor.

What happens when you ignore Shadow AI?

Three scenarios I have either seen or know about firsthand. None of them are hypothetical.

Scenario A: The LP DDQ failure. A mid-cap PE fund I know of was raising Fund IV. A new institutional LP added an AI-usage question to its DDQ template in late 2025. The fund did not have an answer. The IR team improvised one. Two weeks later the LP's compliance counsel asked for the underlying policy document and tool inventory referenced in the answer. There was no underlying document. The LP did not commit. The fund's IR director described it to me as "the most expensive missing PDF of my career."

Scenario B: The regulator inquiry. A DIFC-licensed wealth manager received a routine inquiry from the DFSA in early 2026 asking the firm to describe its use of generative AI tools, including any third-party tools used by employees, and to confirm what client data, if any, had been processed through those tools. The inquiry was not enforcement action. It was information-gathering. The firm spent six weeks producing an answer because the answer did not exist on day zero. It exists now. The Managing Partner described the process as "the most uncomfortable thing we have done as a firm in five years."

Scenario C: The screenshot leak. A junior associate at a corporate law firm posted a redacted-but-recognizable snippet of an AI-generated draft on LinkedIn as an example of how she was speeding up her work. A client saw it. The client identified the matter. The firm's relationship with that client is ongoing but cooler. The associate is still at the firm. The redaction was good. The recognition still happened.

These are not catastrophic outcomes. None of them involves a data breach in the formal sense. All of them involve material business damage: capital not raised, regulator goodwill spent, client trust diminished. All of them were preventable with a governance line that would have taken the firm three to six weeks to build.

What does the right governance line look like (and why it isn't a ban)?

The wrong response to Shadow AI is to ban it. I've watched firms try. They issue an email. They have IT block ChatGPT.com at the firewall. They ask employees to confirm in an annual training that they will not use AI tools.

Two weeks later, the analysts are using a different AI tool. Or using ChatGPT from their phone. Or using a personal hotspot. The ban did not reduce AI use in the firm. It reduced the firm's visibility into AI use in the firm.

The right governance line does the opposite. It surfaces Shadow AI, brings it under management, and gives employees a sanctioned alternative for the work they were already going to do.

Five components.

- A short, plain-English AI usage policy that says: what tools are approved, what data classes are not allowed in any AI tool, how to request approval for a new tool, and who the named owner is. One page. Updated quarterly. Read by every employee at onboarding.

- A tool-approval workflow with a defined turnaround time. If an analyst wants to use a new AI tool, they fill out a one-page form, the COO or General Counsel reviews it within five business days, and the answer is either yes-with-conditions or no-with-reason. Both answers are written.

- An audit log of approved tools, data flowing through them, vendor terms, and renewal dates. Reviewed quarterly. Owned by the firm secretary or operations lead.

- A sanctioned-alternative path for the highest-volume use cases. Most Shadow AI use in regulated firms is for one of: document drafting, meeting summarization, research, and email drafting. If the firm provides an enterprise-grade tool for each of these four cases with proper data-handling terms, 80% of Shadow AI use disappears voluntarily because the sanctioned tool is just better.

- A named owner. In firms under 100 employees, this is usually the COO or General Counsel adding 5-10% of their time. In firms over 200 employees or in highly regulated contexts, it is increasingly a Fractional CAIO: a senior AI advisor on retainer who owns the policy, the audit cadence, and the new-tool decisions. We see roughly half of our wealth-management and PE clients land on the Fractional CAIO model within twelve months of the initial governance line going in.
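The five-business-day turnaround in the approval workflow is easy to make concrete. The sketch below assumes a Monday-to-Friday working week and ignores public holidays. The function name is hypothetical.

```python
from datetime import date, timedelta


def review_deadline(submitted: date, business_days: int = 5) -> date:
    """Date by which a tool request must get a written answer.

    Assumes a Monday-to-Friday working week and ignores public holidays.
    """
    current = submitted
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


# A request submitted on Wednesday 4 March 2026 is due the following Wednesday.
print(review_deadline(date(2026, 3, 4)))  # 2026-03-11
```

A written deadline per request is what turns "we review new tools" into something an audit log can show.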

The next step

If you're a Managing Partner reading this, you have one of three honest reactions.

The first reaction is that none of this applies to you because your firm is small enough that you can see what every employee is doing. In my experience, this is almost always wrong, but the way to find out is to ask the question. Ask your direct reports, in writing, what AI tools they personally use and what client or firm data they have put into any of them. The honest answers will surprise you.

Second reaction: this applies, but you have it under control because you wrote a policy. Open the policy and check the last revision date. If it is more than six months old, it is out of date. The tools have changed, the embedded features have changed, the vendor terms have changed. A policy from 18 months ago describes a different threat surface.
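The six-month check is simple enough to automate as a reminder. A minimal sketch, assuming the policy's last revision date is recorded somewhere the firm can read it. The function name and the day-level comparison are choices made for this example.

```python
from datetime import date


def policy_is_stale(last_revised: date, today: date, max_age_months: int = 6) -> bool:
    """True when the policy is more than max_age_months old."""
    elapsed_months = (today.year - last_revised.year) * 12 + (
        today.month - last_revised.month
    )
    if elapsed_months != max_age_months:
        return elapsed_months > max_age_months
    # Exactly on the boundary month: stale only once the day has passed.
    return today.day > last_revised.day


print(policy_is_stale(date(2024, 9, 1), date(2026, 3, 1)))  # True: 18 months old
```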

Third reaction: this applies and you do not have it under control. The AI Operating Audit HIP runs is the structured way to find out exactly where you stand. It takes three to ten hours of leadership time, runs over two to six weeks, and produces the governance line as one of its outputs. Fixed scope, fixed price, from $15,000. The Audit is the starting point for almost every governance engagement we have ever taken on.

You can also start by applying to work with HIP directly if you already know the exposure is real and want to move. If you want a structured read first, take the free AI Quick Audit: about thirteen minutes, twenty-seven structured questions, a personalized report read personally by Josef.

Shadow AI is not going to slow down. The tools are getting more powerful, more invisible, and more embedded in the software your firm already runs. The governance question is going to keep escalating from "should we have a policy?" to "what does our policy say?" to "show us your audit log." Most firms are going to get one of those questions in the next twelve months from someone with standing.

The firms that have the answer will be fine. The firms that don't will spend six weeks not being fine, the way the ones I described above spent six weeks not being fine.

You can decide which kind of firm yours is, before someone else decides for you.

Frequently asked questions

What is an example of Shadow AI? A wealth-management partner pastes an Investment Memo into a personal ChatGPT account to get a faster summary. The firm has no log of this happening, no record of the data going out, and no contract with OpenAI governing what happens to the data afterwards. That's Shadow AI in its most common form. Less obvious examples: a developer's GitHub Copilot subscription sending fragments of your codebase to the model, an automated workflow built by a former contractor that still calls an external LLM API, or the AI feature in your CRM that quietly summarizes every email it processes.

What are the risks of Shadow AI? The main risk is unmanaged data exposure: client PII, fund data, privileged work, or proprietary analysis flowing through AI tools that the firm has not vetted, with vendor terms the firm has not read. The downstream consequences include regulator inquiry failures, LP DDQ failures, auditor qualifications, malpractice exposure, and reputational damage if a screenshot or output is recognized externally. The secondary risk is decision-quality: when AI usage is undocumented, the firm cannot tell if any of its AI use is actually producing value or just generating activity.

How do you detect Shadow AI in your firm? The fastest detection uses three parallel channels: review the IT asset register for any AI-flagged tools, run an expense-report keyword search for "ChatGPT," "Claude," "Copilot," "OpenAI," "Anthropic," "Perplexity," and "Gemini" across the last twelve months of credit-card and reimbursement claims, and circulate a one-page employee survey asking each person to list the AI tools they personally use for work and what data classes they touch. Most firms find four to five times more tools than they expected.
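The expense-report keyword search might look like the sketch below. It assumes each expense line is a dict with a free-text `description` field, which is a hypothetical shape, and the sample rows are invented. Expect false positives (a colleague named Claude, for instance), so treat the hits as leads to review, not as findings.

```python
import re

AI_KEYWORDS = ["ChatGPT", "Claude", "Copilot", "OpenAI", "Anthropic", "Perplexity", "Gemini"]
PATTERN = re.compile("|".join(re.escape(k) for k in AI_KEYWORDS), re.IGNORECASE)


def flag_ai_expenses(rows):
    """Return (row, matched keywords) for each expense line naming an AI vendor.

    Each row is assumed to be a dict with a free-text 'description' field.
    """
    flagged = []
    for row in rows:
        hits = sorted({m.group(0).lower() for m in PATTERN.finditer(row["description"])})
        if hits:
            flagged.append((row, hits))
    return flagged


# Invented sample data.
rows = [
    {"employee": "A", "description": "OPENAI *CHATGPT SUBSCR"},
    {"employee": "B", "description": "Taxi to client meeting"},
    {"employee": "C", "description": "Anthropic Claude Pro"},
]
for row, hits in flag_ai_expenses(rows):
    print(row["employee"], hits)
# A ['chatgpt', 'openai']
# C ['anthropic', 'claude']
```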

What is the difference between Shadow AI and sanctioned AI? The same tool can be either. The difference is governance: a sanctioned AI tool has a named owner, a written usage policy, a vendor contract with appropriate data-handling terms, and an entry in the firm's audit log. Shadow AI is any AI tool in use that lacks one or more of those four elements. Banning AI does not move tools from shadow to sanctioned; it just pushes them further into the shadow.

Who owns Shadow AI governance in a mid-market firm? In firms under 100 employees, ownership usually sits with the COO or General Counsel as a 5-10% time addition. In firms over 200 employees or in highly regulated contexts (wealth management, multi-family offices, PE funds, corporate law), ownership increasingly sits with a Fractional Chief AI Officer: a senior AI advisor on retainer who owns the policy, the quarterly audit cadence, and new-tool decisions. The Managing Partner stays accountable for the decision but does not run the function.

How long does it take to fix Shadow AI in a regulated firm? The governance line itself (policy, approval workflow, audit log, named owner) takes three to six weeks to install for a firm of 50 to 500 employees. The full clean-up of Shadow AI use takes longer, typically three to six months, because it depends on employees migrating from their shadow tools to the sanctioned alternatives. The reduction is dramatic in months two and three once sanctioned alternatives are good enough that employees prefer them.
